eventChemistry™

Information Assurance through Informational Transparency

Paul S. Prueitt

December 24, 2001

First Report on eventChemistry

(December 17th, 2001)

(October 29th note: The author has taken a leave of absence from business activities and is redeveloping the OntologyStream materials to remove all business sales pitches. However, this process will take a while. Reducing the web pages to their essential logical and scientific elements is a great pleasure.)

Part 1: The avoidance of stratification

Let us review readings in theoretical linguistics.

These readings use the phrase "double articulation of language". In particular, if you have John Lyons' "Introduction to Theoretical Linguistics" (Cambridge University Press, 1968), you might read the following:

"Linguists sometimes talk of the 'double articulation' (or 'double structure') of language; and this phrase is frequently understood mistakenly, to refer to the correlation of the two planes of expression and content. What is meant is that the units on the 'lower' level of phonology (the sounds of a language) have no function other than that of combining with one another to form the 'higher' units of grammar (words). It is by virtue of the double structure of the expression-plane that languages are able to represent economically many thousands of different words. For each word may be represented by a different combination of a relatively small set of sounds, just as each of the infinitely large set of natural numbers is distinguished in the normal decimal notation by a different combination of the ten basic digits." (page 54)
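The economy Lyons describes is plain combinatorics: an inventory of n lower-level units yields n^k distinct sequences of length k. The short Python sketch below counts such sequences. The inventory of 30 sounds is a hypothetical round number chosen for illustration, not a figure from Lyons, and real languages forbid many of the sequences counted here.

```python
from itertools import product


def count_sequences(inventory_size: int, max_length: int) -> int:
    """Count distinct sequences of length 1..max_length over an inventory of units.

    Each length k contributes inventory_size ** k sequences.
    """
    return sum(inventory_size ** k for k in range(1, max_length + 1))


# Decimal analogy from the quotation: ten digits in exactly three places
# (leading zeros allowed) give the 1,000 numerals 000..999.
three_digit = ["".join(p) for p in product("0123456789", repeat=3)]
print(len(three_digit))

# Hypothetical inventory of 30 sounds, sequences up to 4 units long.
print(count_sequences(30, 4))
```

The count grows geometrically with length while the inventory stays fixed, which is the economy the quotation attributes to the double structure of the expression-plane.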

From this distinction between substance and form springs the school of linguistics founded by de Saussure.

The Peircean school of semiotics has a similar distinction, which can be seen in the statement of Peirce's Unified Logical Vision (ULV). The ULV is paraphrased as:

"Concepts are like chemical compounds, in that they are composed of atoms."

This distinction seems readily accessible to students and scholars in Russia, but not in the United States. In Europe there is a mixed appreciation of this type of stratified thinking.

A mistake is typically made when thinking about the correlation between atoms and compounds. This specific mistake underlies the class of methods called Latent Semantic Indexing (LSI), which is used in text understanding systems. LSI is often applied to obtain a correlation between words and paragraphs; this is how the LSI matrix is set up, with words along one axis and paragraphs along the other. The mistake is to treat the atoms (words) and the compounds (paragraphs) as having equivalent "ontological" status, when in fact they do not.
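To make the LSI set-up concrete, here is a minimal Python sketch of the word-by-paragraph count matrix from which LSI starts. It uses raw counts and whitespace tokenization for simplicity; practical LSI systems usually apply a weighting such as tf-idf and then compute a truncated singular value decomposition of this matrix, a step omitted here. The function name `term_paragraph_matrix` and the sample paragraphs are illustrative.

```python
from collections import Counter


def term_paragraph_matrix(paragraphs):
    """Build the word-by-paragraph count matrix that LSI starts from.

    Rows are words (atoms), columns are paragraphs (compounds), and
    matrix[i][j] is the number of times word i occurs in paragraph j.
    """
    counts = [Counter(p.lower().split()) for p in paragraphs]
    vocabulary = sorted(set().union(*counts))
    matrix = [[c[word] for c in counts] for word in vocabulary]
    return vocabulary, matrix


paragraphs = ["the event log records the event", "an atom of the log"]
vocabulary, matrix = term_paragraph_matrix(paragraphs)
for word, row in zip(vocabulary, matrix):
    print(f"{word:8} {row}")
```

Words index the rows and paragraphs index the columns, and the decomposition that LSI applies next treats the two axes symmetrically. That symmetry is one way of seeing the equivalent status the text objects to.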

Experimental research on the relationship between phonology and semantics has clearly established that form and substance do not have the same ontological status in natural language use. The framework that follows from experimental work on double articulation informs second-language acquisition research, and this research in turn is linked to research on perception and the human visual system, including the perception of color.

Double articulation presents a challenge to scientific reductionism. Scientific reductionism simply cannot be justified if double articulation is accepted.

It cannot be justified because form (from which meaning is most directly derived) is simply missing at the level of the substance that fills this form. To account for double articulation, we must move from a simple theory of physical science to a complex theory. Complexity theory postulates that no part of the physical world, or its natural law, can be reduced to a set of tokens and rules acting on those tokens. Stratification is one way to organize the processes in the physical world into organizational levels.

If the physical world is modeled as stratified, then the issue of substance and form can be seen in a new light. Looking from within a single level of organization, it is easy to confuse what is substance with what is form. From the perspective of the level of expression, the substance BECOMES the form, because the atoms from the lower level are subsumed and no longer exist as things at that level of organization. So, as in quantum mechanics, there are things that do not properly exist from the perspective of an organizational stratum. Bell's theorem (in quantum theory) sets this fact in stone.

This stratified theory is not simple to understand. It is, however, vital to the development of a reasonable ontology apparatus.

The success of stratification can be seen in the performance of stratified machine intelligence:

1) Stratified theory leads directly to a simpler form of Artificial Intelligence (AI) that is radically more powerful when measured using performance metrics.

2) Stratified theory separates the currently difficult and unsolvable problems in Information Assurance (IA) into a complex part (human motivation) and a simple part (the computer science of invariance detection, event detection and incident management). Using Referential Information Bases (RIBs), the simple part is, we conjecture, completely solvable.

3) Stratified theory simplifies all forms of physical science by discarding the centuries-old philosophies of reductionism.

In 2002, Don Mitchell and Paul Prueitt developed the first three OSI Browsers. These browsers applied new principles from computer science, ontology architecture and what one might call Perceptual Knowledge Management. The fourth Browser, generalFrameworks, is designed to support the development of structured ontology for profiling the elements of text collections (not completed as of October 2002).

Perceptual Knowledge Management starts with the notion of a finite state machine and a means by which a human can easily apply transforms to this finite state machine. This is the first step toward a Many-to-Many (M2M) communications device.
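The text does not specify how Perceptual Knowledge Management represents its finite state machine or which transforms a human may apply. As a minimal sketch, assuming a deterministic transition table and a single hypothetical transform, `merge_states`, in which a person declares two states equivalent, it might look like this in Python. The class, method, state and symbol names are all illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class FiniteStateMachine:
    """Deterministic FSM stored as a transition table: (state, symbol) -> state."""
    start: str
    transitions: dict = field(default_factory=dict)

    def run(self, symbols):
        """Feed a sequence of symbols; return the final state, or None if stuck."""
        state = self.start
        for symbol in symbols:
            state = self.transitions.get((state, symbol))
            if state is None:
                return None
        return state

    def merge_states(self, keep, drop):
        """Human-initiated transform: treat state `drop` as the same state as `keep`.

        Raises ValueError if the merge would give one (state, symbol) pair
        two different targets, i.e. make the machine nondeterministic.
        """
        def rename(q):
            return keep if q == drop else q

        merged = {}
        for (q, symbol), target in self.transitions.items():
            key, new_target = (rename(q), symbol), rename(target)
            if key in merged and merged[key] != new_target:
                raise ValueError(f"merge would make {key} nondeterministic")
            merged[key] = new_target
        self.transitions = merged
        self.start = rename(self.start)


# Hypothetical states and symbols.
fsm = FiniteStateMachine("idle", {
    ("idle", "login"): "session",
    ("session", "logout"): "idle",
    ("idle", "ssh"): "remote",
    ("remote", "exit"): "idle",
})
print(fsm.run(["ssh"]))
fsm.merge_states("session", "remote")  # a person judges the two states equivalent
print(fsm.run(["ssh", "exit"]))
```

Refusing a merge that would create conflicting transitions keeps the machine deterministic; an interactive tool would presumably hand such conflicts back to the person making the transform.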

In addition to opening access to eventChemistry through the OSI Browsers, the Event Browser itself will have a standard export and import process that supports a stratified objectSpace. This import/export will provide academia with a primary research tool for event chemistry.

The objectSpace has two levels of abstraction. The first level corresponds to atoms, defined by human conjecture about the meaning of correlations among data invariances. The elements of invariance are the elementary event atoms found in event logs.
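The text does not say how event atoms are extracted. A minimal Python sketch, assuming that event log records have already been parsed into field/value dictionaries and that an analyst picks which field to treat as carrying invariance: a value counts as an atom if it recurs in at least `min_count` records. The function `extract_atoms`, the field names and the addresses are hypothetical.

```python
from collections import Counter


def extract_atoms(records, atom_field, min_count=2):
    """Return {value: count} for values of `atom_field` seen in >= min_count records.

    Choosing `atom_field` stands in for the human conjecture about which
    data invariances are meaningful; recurrence stands in for invariance.
    """
    counts = Counter(r[atom_field] for r in records if atom_field in r)
    return {value: n for value, n in counts.items() if n >= min_count}


# Hypothetical event log records, already parsed into fields.
records = [
    {"src": "10.0.0.5", "dst": "10.0.0.9", "port": 22},
    {"src": "10.0.0.5", "dst": "10.0.0.7", "port": 80},
    {"src": "10.0.0.8", "dst": "10.0.0.9", "port": 22},
    {"src": "10.0.0.5", "dst": "10.0.0.9", "port": 443},
]

print(extract_atoms(records, "src"))   # {'10.0.0.5': 3}
print(extract_atoms(records, "port"))  # {22: 2}
```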

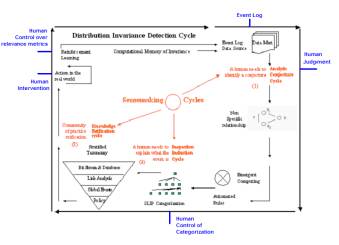

Figure 1: The SenseMaking environment for SLIP

The second level of abstraction corresponds to emergent computing that resolves a bag of atoms into a pictorial graph (see the logo at the top of this paper). A complete "SenseMaking" environment is shown in Figure 1.
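The text does not describe the computation behind the second level. As a stand-in, and not as the method used by SLIP, the Python sketch below resolves a bag of atoms into a graph by linking two atoms whenever they appear in the same event record, weighting each edge by the number of such records. The records, field names and `cooccurrence_graph` are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence_graph(records, atom_fields):
    """Resolve a bag of event atoms into an undirected weighted graph.

    Each atom is a (field, value) pair. Two atoms are linked whenever they
    occur in the same record; the edge weight counts those records.
    Returns {atom: {neighbor_atom: weight}}.
    """
    graph = defaultdict(lambda: defaultdict(int))
    for record in records:
        atoms = [(f, record[f]) for f in atom_fields if f in record]
        for a, b in combinations(atoms, 2):
            graph[a][b] += 1
            graph[b][a] += 1
    return {atom: dict(neighbors) for atom, neighbors in graph.items()}


# Hypothetical parsed event records.
records = [
    {"src": "10.0.0.5", "dst": "10.0.0.9"},
    {"src": "10.0.0.5", "dst": "10.0.0.7"},
    {"src": "10.0.0.5", "dst": "10.0.0.9"},
]

graph = cooccurrence_graph(records, ["src", "dst"])
for atom, neighbors in graph.items():
    print(atom, neighbors)
```

Drawing the resulting adjacency structure as a picture is left to a graph-layout tool.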